Why Most AI Deployments in Healthcare Fail to Scale...

How safe implementation leads to real ROI.

Welcome, AI Entrepreneurs!

AI adoption in healthcare is accelerating.

Hospitals and health systems are under pressure to move quickly, show results, and keep up with competitors. But speed alone does not equal progress.

When AI is rushed into clinical environments without proper validation, governance, and clinician engagement, the risks compound quickly.

In today’s issue, I break down the difference between rushed AI deployment and safe implementation, and why the long path is often the only sustainable one.

In today’s AIpreneur Insights:

Spotlight of the Week: Safe vs Rushed AI Rollout in Healthcare

Become the Human in the Loop in Healthcare AI

Top 3 AI Business Search Trends of the Week

Top 5 AI Tools That Support Safe and Responsible AI Rollout in Healthcare

Free Resource: The Human-in-the-Loop Healthcare AI Decision Guide

Safe vs Rushed AI Rollout in Healthcare

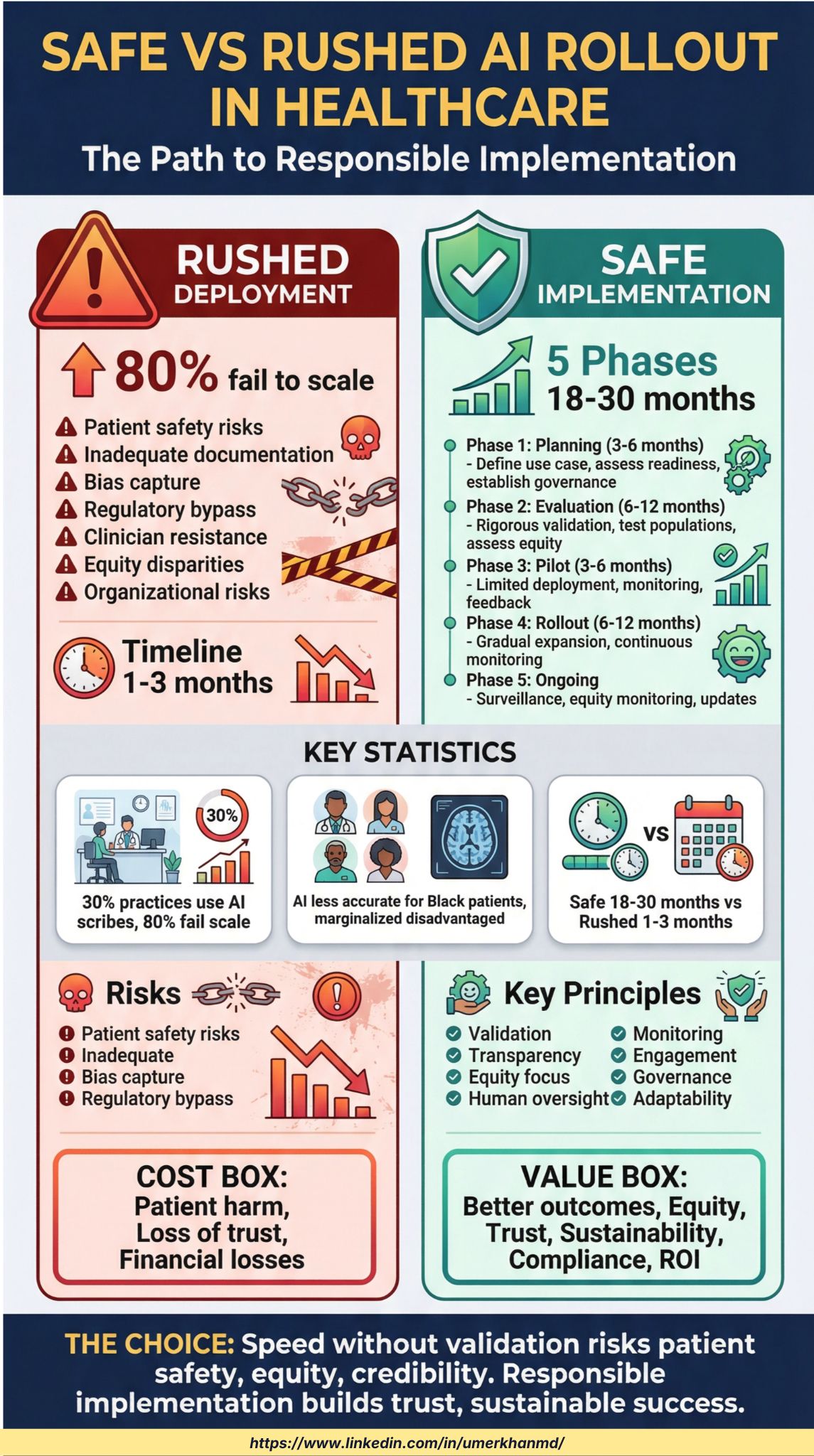

This week’s infographic compares what happens when AI is deployed quickly versus when it is implemented responsibly.

Rushed deployments often skip validation, clinician involvement, and equity checks. They may look successful early, but most fail to scale, and they introduce patient-safety, bias, and regulatory risks along the way.

Safe implementation follows a phased approach that includes planning, evaluation, pilot testing, gradual rollout, and ongoing monitoring. This process takes longer, but it builds trust, improves outcomes, and delivers long-term value.

The graphic shows why responsible AI adoption in healthcare is not about slowing innovation. It is about making innovation work.
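
As a rough illustration of the phased approach described above, the sketch below models each phase as a gate that must be cleared before the rollout advances. The five phase names come from the text; the `Rollout` class, the `GATES` checklist, and every check name are hypothetical placeholders, not a standard or any vendor's method.

```python
from dataclasses import dataclass, field

# Phase names taken from the text, in order.
PHASES = ["planning", "evaluation", "pilot testing",
          "gradual rollout", "ongoing monitoring"]

# Hypothetical exit criteria for each phase (illustrative only).
GATES = {
    "planning": {"governance owner named"},
    "evaluation": {"validated on local data", "equity check done"},
    "pilot testing": {"clinician feedback reviewed"},
    "gradual rollout": {"monitoring plan in place"},
    "ongoing monitoring": set(),  # final phase: nothing to advance to
}


@dataclass
class Rollout:
    phase_index: int = 0
    completed: set = field(default_factory=set)

    @property
    def phase(self) -> str:
        return PHASES[self.phase_index]

    def record(self, check: str) -> None:
        """Mark a check as completed."""
        self.completed.add(check)

    def missing(self) -> set:
        """Checks still outstanding for the current phase."""
        return GATES[self.phase] - self.completed

    def advance(self) -> str:
        """Move to the next phase only if every gate check is done."""
        outstanding = self.missing()
        if outstanding:
            raise RuntimeError(
                f"Cannot leave '{self.phase}': missing {sorted(outstanding)}"
            )
        if self.phase_index < len(PHASES) - 1:
            self.phase_index += 1
        return self.phase
```

Calling `advance()` before a phase's checks are recorded raises an error. That is the point of the sketch: skipping validation or an equity check becomes a visible override rather than a silent default.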

If you are considering an AI scribe for your practice or organization, the difference between success and failure is how it is deployed. I regularly review workflows, risks, and readiness with teams before adoption.

If you want to discuss whether an AI scribe is appropriate for your setting, we can review it together. You can book a call using my Calendly link.

Not subscribed yet?

Are you keeping up with the latest in AI for business? Our newsletter is essential reading for anyone navigating the AI landscape. It’s free, and industry leaders from top companies like Google, HubSpot, and Meta are already on board.

Interested in daily insights and practical AI tips for driving business success? Sign up for our newsletter with just one click—it's completely free!

Big News: Our Program Is Live!!!

Most AI failures in healthcare are not technical. They are implementation failures.

Become the Human in the Loop in Healthcare AI teaches clinicians, executives, and builders how to evaluate AI tools, design safe rollout strategies, and maintain accountability in real-world healthcare environments.

You will learn how to move fast where it is safe, slow down where it matters, and lead AI adoption with confidence instead of pressure.

If you work in healthcare and want to deploy AI responsibly, this course is built for you.

Check out the structured program HERE: https://www.umerkhanmd.com/buy_videoprogram

How can AI power your income?

Ready to transform artificial intelligence from a buzzword into your personal revenue generator?

HubSpot’s groundbreaking guide "200+ AI-Powered Income Ideas" is your gateway to financial innovation in the digital age.

Inside you'll discover:

A curated collection of 200+ profitable opportunities spanning content creation, e-commerce, gaming, and emerging digital markets—each vetted for real-world potential

Step-by-step implementation guides designed for beginners, making AI accessible regardless of your technical background

Cutting-edge strategies aligned with current market trends, ensuring your ventures stay ahead of the curve

Download your guide today and unlock a future where artificial intelligence powers your success. Your next income stream is waiting.

Top 3 AI Business Search Trends of the Week

1. YouTube Will Let Creators Clone Themselves With AI Shorts

YouTube is preparing to let creators generate Shorts using AI versions of their own likeness, signaling a major shift in how content is produced at scale. While the company frames AI as a creative tool rather than a replacement, it’s also racing to control low-quality AI content and protect creators from misuse.

The Details:

Creators will be able to generate Shorts using their own AI likeness, alongside tools for AI clips, stickers, music, and auto-dubbing.

YouTube says creators will retain control, with new tools to manage and restrict how their likeness is used in AI-generated content.

The platform has already deployed likeness-detection tech to prevent unauthorized AI use of creators’ faces or voices.

YouTube is strengthening systems to limit “AI slop” and repetitive content while expanding Shorts into new formats like image posts.

Why it Matters:

This move accelerates the shift toward synthetic creators, where identity becomes a reusable digital asset. For creators, AI likeness tools could unlock massive scalability without burnout. For platforms, the challenge is preserving authenticity and trust as feeds fill with AI-generated faces. How YouTube balances creativity, consent, and content quality may set the standard for the next era of creator economies.

Find out more about it here: https://techcrunch.com/2026/01/21/youtube-will-soon-let-creators-make-shorts-with-their-own-ai-likeness/

2. Why Meta Just Shut Off AI Characters for Teens Worldwide

Meta has paused teen access to its AI characters across all apps while it rebuilds the experience with stronger parental controls. The move comes amid mounting legal and regulatory pressure over teen safety and AI-driven interactions.

The Details:

Teens will no longer be able to access AI characters globally until Meta releases an updated version with built-in parental controls.

The pause applies both to users who self-identify as teens and accounts flagged by Meta’s age-prediction systems.

Meta says parents want greater transparency and control over how teens interact with AI-powered characters.

The updated AI characters will offer age-appropriate responses and focus on topics like education, sports, and hobbies.

Why it Matters:

Teen safety is becoming the frontline issue in AI regulation, forcing platforms to act before courts and lawmakers do it for them. Meta’s decision signals a shift from experimenting in public to locking things down under legal pressure. How well these safeguards actually work may determine whether AI companions survive in youth-facing platforms at all.

Find out more about it here: https://techcrunch.com/2026/01/23/meta-pauses-teen-access-to-ai-characters-ahead-of-new-version/

3. Apple’s Siri Is About to Get a Gemini Brain

Apple is reportedly preparing to unveil a major Siri upgrade powered by Google’s Gemini AI models in February. The update would mark Apple’s first meaningful step toward delivering the personalized, task-completing AI assistant it promised last year.

The Details:

The new Siri will reportedly use Google’s Gemini models to understand on-screen content and access personal data to complete tasks.

This February update is expected to precede an even larger, more conversational Siri overhaul planned for Apple’s June developer conference.

The later version could run directly on Google’s cloud infrastructure and behave more like modern chatbots.

The move follows internal struggles within Apple’s AI team and signals a strategic reset after leadership changes.

Why it Matters:

Apple’s decision to lean on Google for core AI capabilities reflects how hard it is to compete at the frontier of generative AI. If successful, this partnership could redefine Siri’s role from a passive assistant to an active digital agent. But it also raises questions about Apple’s long-term AI independence — and whether the company can maintain its privacy-first image while relying on external AI infrastructure.

Find out more about it here: https://techcrunch.com/2026/01/25/apple-will-reportedly-unveil-its-gemini-powered-siri-assistant-in-february/

Stay tuned for more updates in our next newsletter!

Top 5 AI Tools That Support Safe and Responsible AI Rollout in Healthcare

1. ValidMind

ValidMind is built specifically for AI model risk management and validation. It helps teams document model assumptions, testing results, bias analysis, and ongoing monitoring. Ideal for healthcare organizations that need structured validation and governance before scaling AI systems.

2. Arize AI

Arize AI focuses on monitoring model performance after deployment. It helps detect drift, bias, and performance degradation over time. This is critical for healthcare environments where models must remain safe and reliable long after rollout.

3. WhyLabs

WhyLabs provides continuous AI observability and anomaly detection. It flags unexpected behavior in data and models before issues reach patients or clinicians. Useful for organizations serious about post-deployment surveillance.

4. Fiddler AI

Fiddler AI specializes in explainability and transparency for AI models. It helps teams understand why models make certain predictions, which is essential for clinician trust, regulatory review, and ethical oversight in healthcare.

5. Notion AI

Notion AI supports the non-technical side of safe AI rollout. Teams use it to manage rollout phases, document governance decisions, track feedback from pilots, and maintain institutional memory throughout the AI lifecycle.
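
To make the "drift" that several of these tools monitor more concrete, here is a minimal sketch of one common drift statistic, the population stability index (PSI), in plain Python. It is not the API of Arize AI, WhyLabs, or any other product listed above; the function name `psi`, the bin count, and the sample data are assumptions chosen for illustration.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between a baseline sample and a new sample.

    Bins are equal-width over the baseline's range; new values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def fractions(values):
        counts = [0] * bins
        for v in values:
            i = int((v - lo) / width)
            counts[min(max(i, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical model scores at validation time versus after rollout.
baseline = [i / 100 for i in range(100)]
shifted = [x + 0.5 for x in baseline]

print(psi(baseline, baseline))  # identical distributions give 0.0
print(psi(baseline, shifted))   # a large shift gives a large value
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, but any threshold used in a clinical setting should be agreed with the governance team rather than taken from this sketch.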

When AI is wrong in healthcare,

we call it an “edge case.”

When humans are wrong,

it’s called malpractice.

That difference matters.

Because “edge cases” aren’t rare in medicine.

They’re the norm.

- Complex patients.

- Incomplete data.

- Human nuance.

- Time pressure.

Yet we excuse AI errors as statistical noise.

While holding clinicians to absolute standards.

That’s a dangerous asymmetry.

AI doesn’t carry liability.

Clinicians do.

AI doesn’t feel regret.

Humans do.

So when AI makes a mistake,

Who absorbs the risk?

Not the model. Not the vendor.

The clinician.

That’s why AI can assist, but never own decisions.

And why “human-in-the-loop” isn’t a slogan.

It’s a safety requirement.

If we want AI in healthcare to scale responsibly,

We need aligned accountability, not double standards.

Learn AI in 5 minutes a day

What’s the secret to staying ahead of the curve in the world of AI? Information. Luckily, you can join 2,000,000+ early adopters reading The Rundown AI — the free newsletter that makes you smarter on AI with just a 5-minute read per day.

Want to work with me? Here’s how:

I help companies with AI integration and with other technology and development requirements. Book a Strategy Call. (https://calendly.com/dr-umerkhan/available-for-meeting)

Promote Your Product: I’ll share your product with my 15k followers on LinkedIn. Reply “promo” if interested.

If you enjoyed this newsletter, please forward it to your friends and colleagues.

Follow me on LinkedIn, YouTube, and X/Twitter to see my latest content.

Download the printable PDF…

Use this one-page checklist to guide safer AI rollout decisions in healthcare settings.

Stay Tuned

Stay tuned for more updates on AI trends, tools, and insights in our next newsletter.
